You define:

- Business rules and transformations

- Metrics and analytical logic

- What the data should represent

The data path from SQL to production is still manual and fragile — even when AI writes the SQL in seconds. BoltPipeline governs that entire path across seven pillars: validation, lineage, profiling, certification, approval workflows, drift detection, and operations. One platform. Nothing reaches production without earning it.

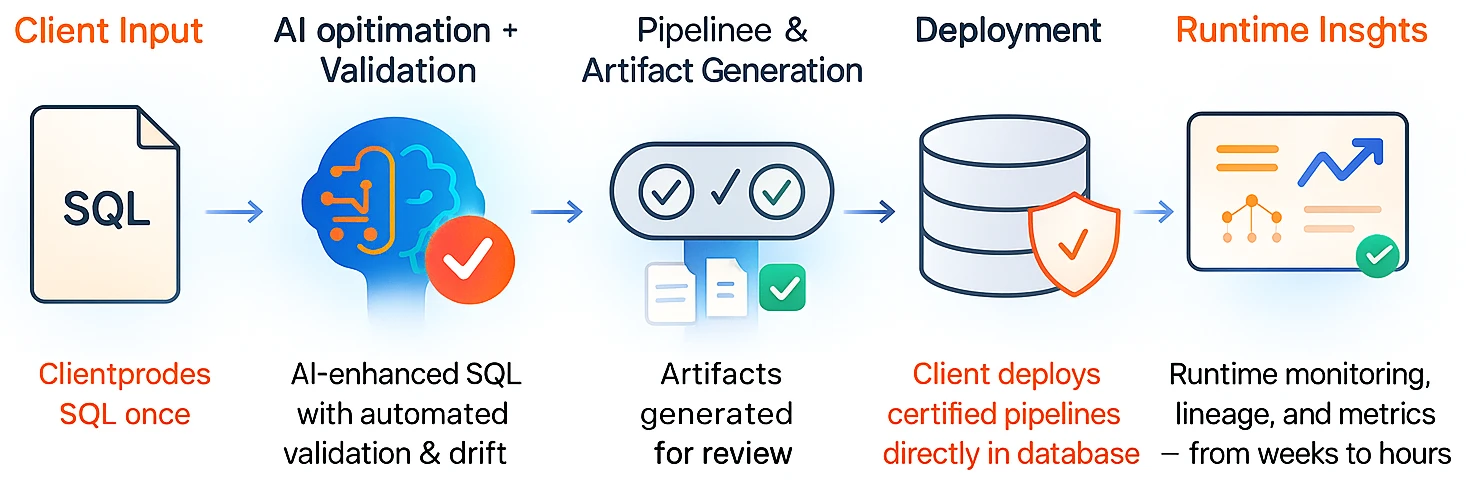

BoltPipeline takes SQL — written by your team or generated by AI — and turns it into a validated, certified, governed data pipeline that runs directly inside your database.

The platform automates the work between SQL authoring and production: validation, lineage, drift detection, approval workflows, certification gates, and operations — without moving data or introducing proprietary runtimes.

You write SQL. The platform handles compilation, validation, lineage, and deployment.

- Author SQL business rules

- Analyze structure, semantics, and dependencies

- Profile data and establish baselines

- Validate changes and detect drift, along with its downstream impact

- Generate certified, executable pipeline artifacts
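To make the profiling and drift steps concrete, here is a minimal sketch of the general idea: capture a baseline profile of a column, then compare a later profile against it. This is not BoltPipeline's implementation. The helper names `profile_table` and `detect_drift`, the chosen metrics, and the 10% tolerance are all assumptions made for illustration, and an in-memory SQLite database stands in for your warehouse.

```python
import sqlite3


def profile_table(conn, table, column):
    """Collect a tiny profile: row count, null fraction, distinct count.

    Hypothetical helper. Identifiers are interpolated directly into the
    query, so only pass trusted table and column names.
    """
    total, nulls, distinct = conn.execute(
        f"SELECT COUNT(*), SUM({column} IS NULL), COUNT(DISTINCT {column}) "
        f"FROM {table}"
    ).fetchone()
    nulls = nulls or 0  # SUM returns NULL on an empty table
    return {
        "rows": total,
        "null_fraction": (nulls / total) if total else 0.0,
        "distinct": distinct,
    }


def detect_drift(baseline, current, tolerance=0.10):
    """Return the metrics whose relative change exceeds `tolerance`."""
    drifted = []
    for name, base in baseline.items():
        now = current[name]
        if base == 0:
            changed = now != 0  # any move away from a zero baseline counts
        else:
            changed = abs(now - base) / abs(base) > tolerance
        if changed:
            drifted.append(name)
    return drifted


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER, amount REAL)")
conn.executemany(
    "INSERT INTO orders VALUES (?, ?)",
    [(1, 10.0), (2, 20.0), (3, 30.0), (4, 40.0)],
)
baseline = profile_table(conn, "orders", "amount")

# A later load introduces rows with missing amounts.
conn.executemany("INSERT INTO orders VALUES (?, ?)", [(5, None), (6, None)])
current = profile_table(conn, "orders", "amount")

drift = detect_drift(baseline, current)
print(drift)
```

In this toy run the row count and the null fraction both move past the tolerance while the distinct count stays put. A real platform would track many more metrics and tie each drifted metric to the downstream objects that depend on it.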

You own business logic. BoltPipeline owns the engineering path to production.

BoltPipeline validates SQL pipelines continuously, both as they are implemented and each time they execute, so problems surface before anything reaches production.

This includes schema checks, join safety, profiling, lineage, drift detection, certification gates, and downstream impact analysis, all enforced consistently by the platform.
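As one illustration of what a join safety check can look like, the sketch below tests whether a join key is unique on the lookup side before the join runs. A duplicated key silently multiplies rows, which inflates any metric computed afterwards. The function name `join_key_is_unique` and the sample tables are hypothetical, and this is an assumed approach, not a description of how BoltPipeline performs the check.

```python
import sqlite3


def join_key_is_unique(conn, table, key):
    """True when no value of `key` appears more than once in `table`.

    Hypothetical helper. Identifiers are interpolated directly, so only
    pass trusted table and column names.
    """
    duplicate = conn.execute(
        f"SELECT 1 FROM {table} GROUP BY {key} HAVING COUNT(*) > 1 LIMIT 1"
    ).fetchone()
    return duplicate is None


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customers (customer_id INTEGER, region TEXT)")
conn.executemany(
    "INSERT INTO customers VALUES (?, ?)",
    [(1, "EU"), (2, "US"), (2, "APAC")],  # customer 2 appears twice
)
conn.execute("CREATE TABLE orders (order_id INTEGER, customer_id INTEGER)")
conn.executemany("INSERT INTO orders VALUES (?, ?)", [(100, 1), (101, 2)])

safe = join_key_is_unique(conn, "customers", "customer_id")

# Without the check, the join fans out: 2 orders become 3 joined rows.
joined_rows = conn.execute(
    "SELECT COUNT(*) FROM orders JOIN customers USING (customer_id)"
).fetchone()[0]

print(safe, joined_rows)
```

Running the uniqueness check first lets a pipeline stop, or at least warn, before the fan-out reaches a report.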

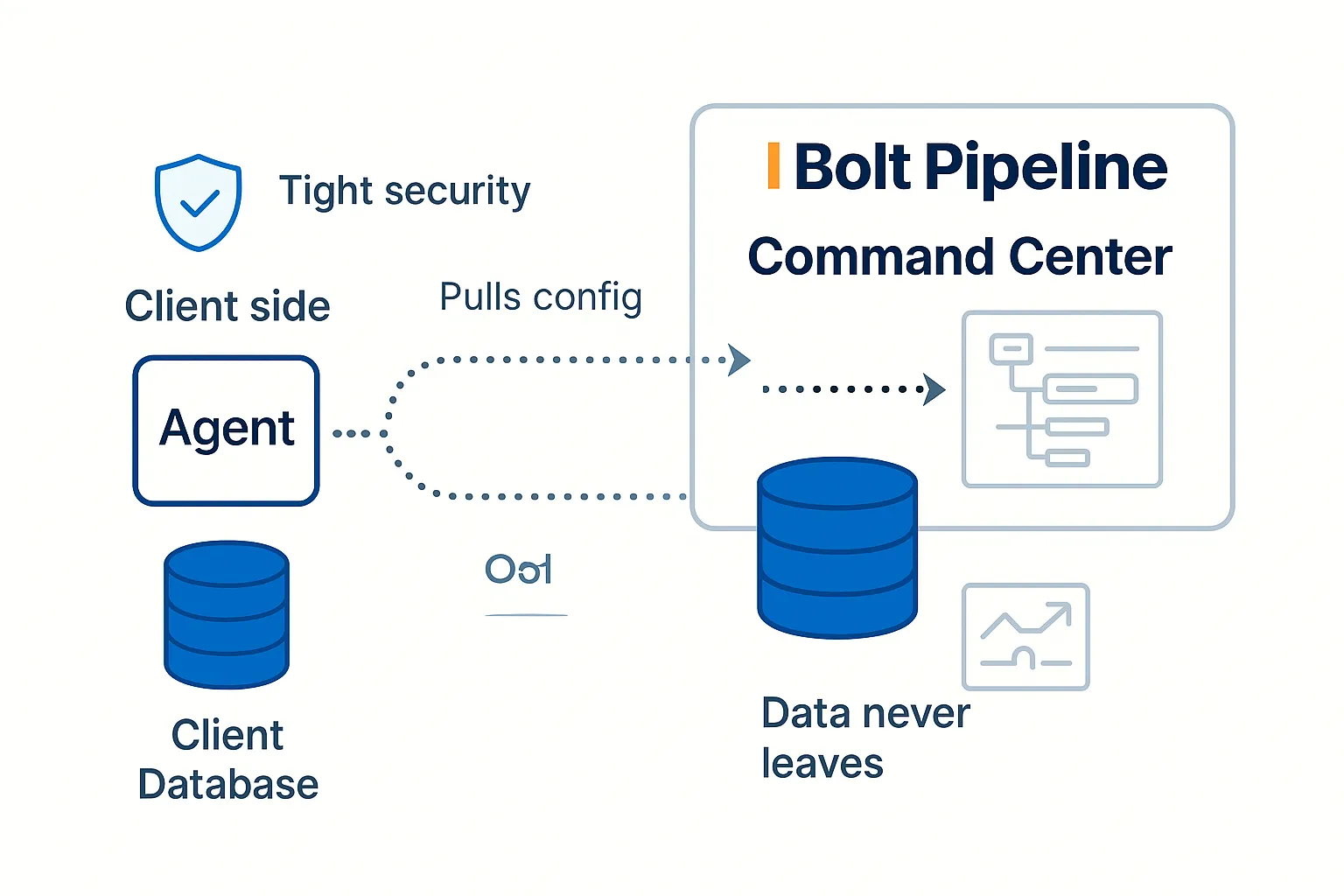

The BoltPipeline Agent runs inside your environment, close to your data. It implements and executes pipeline artifacts derived from SQL.

The Command Center coordinates validation, certification, governance, and visibility using metadata and operational signals — while your data never leaves your boundary.

Walk through a real pipeline using your schemas and business rules — no migration, no lock-in, no data leaves your database.

SQL-first pipelines, validated and governed — executed directly inside your database.

No new DSLs. No fragile orchestration. Just SQL with built-in validation, lineage, and governance.