Schemas & Semantics

- Type and compatibility checks

- Contract verification for renamed or removed fields

- Safe materialization across models
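As a rough illustration of what a contract check for renamed or removed fields can look like, the sketch below compares two versions of a model's schema and reports columns that disappeared or changed type. It is not BoltPipeline's implementation: the `diff_schema` helper, the dictionary schema format, and the column names are all assumptions made for this example.

```python
from typing import Dict, List

# Hypothetical schema format for this sketch: column name -> declared SQL type.
Schema = Dict[str, str]


def diff_schema(old: Schema, new: Schema) -> List[str]:
    """Return human-readable contract violations between two schema versions."""
    issues: List[str] = []
    for column, old_type in old.items():
        if column not in new:
            # To a downstream consumer, a rename is indistinguishable from a removal.
            issues.append(f"removed or renamed: {column}")
        elif new[column] != old_type:
            issues.append(f"type changed: {column} {old_type} -> {new[column]}")
    return issues


# Illustrative data only: `cust_id` was renamed and `amount` changed type.
old_schema = {"order_id": "BIGINT", "amount": "DECIMAL(10,2)", "cust_id": "BIGINT"}
new_schema = {"order_id": "BIGINT", "amount": "VARCHAR", "customer_id": "BIGINT"}

violations = diff_schema(old_schema, new_schema)
for issue in violations:
    print(issue)
```

A check like this only flags the break. Deciding whether a missing column was renamed or truly dropped usually needs lineage or human review.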

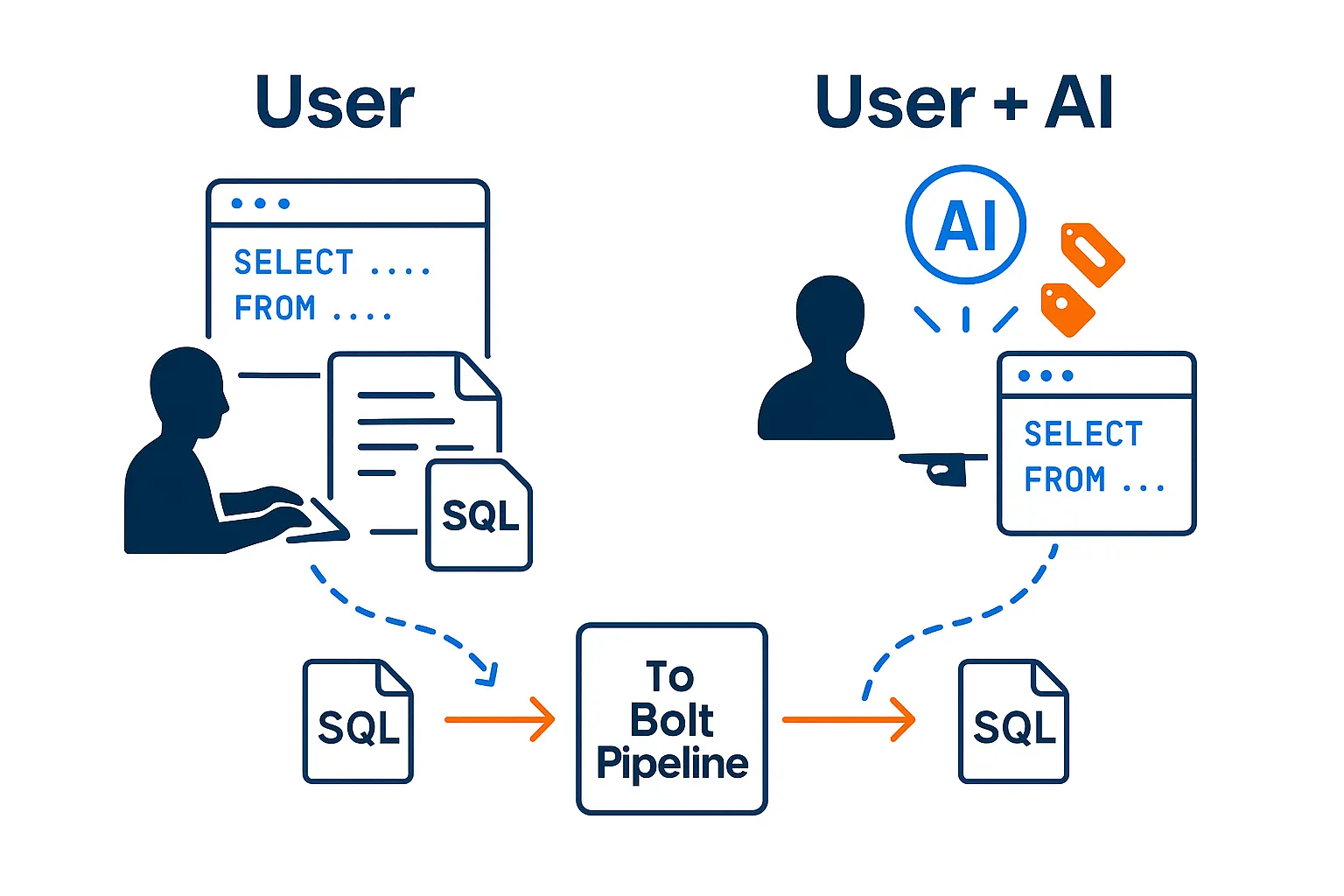

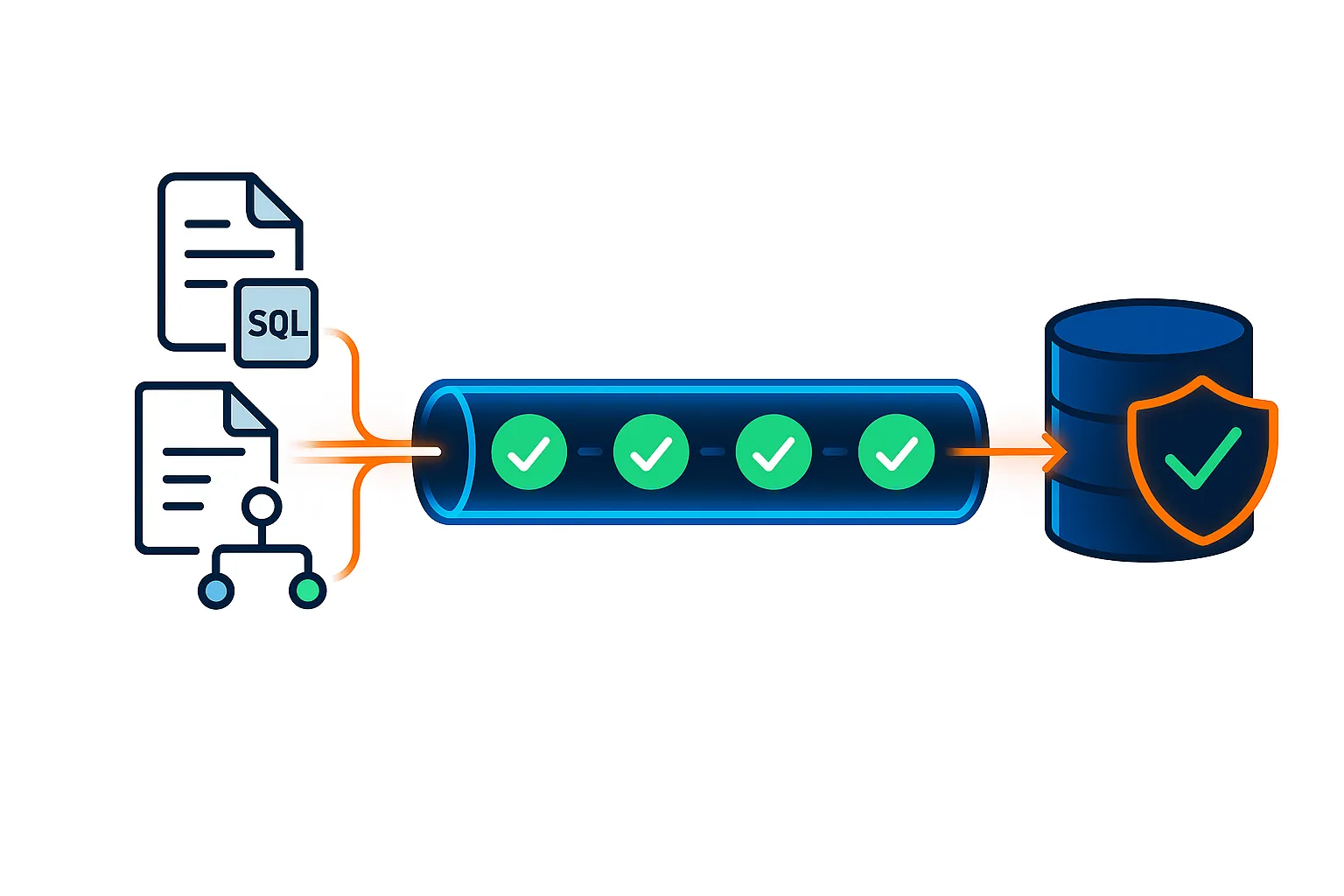

You write SQL — or your AI does. From there, the lifecycle takes over: SQL is parsed, validated, certified against your live database, and only then deployed to run. Every stage produces real artifacts. Every gate is enforced.

You write SQL. The platform handles compilation, validation, and lineage, and produces the artifacts you deploy.

- Author SQL business rules

- Analyze structure, semantics, and dependencies

- Profile data and establish baselines

- Validate changes and detect drift with impact

- Generate certified, executable pipeline artifacts

A simple, repeatable flow that separates authoring from implementation so teams move faster without sacrificing trust.

Teams express business logic and transformations in plain SQL, which remains the source of truth. No DSLs, no YAML-heavy configs. AI assistance is optional, for drafting or refining SQL safely.

BoltPipeline analyzes intent, validates correctness, and generates certified pipeline artifacts. Validation against 30+ rules, column-level lineage, and profiling all run before anything ships.

Run pipelines directly inside your database. Drift detection, health scoring, and governance continue after deployment. You own runtime and scheduling.
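Profiling, baselines, and drift detection are named here without detail. One common way to think about them is sketched below: compute a small profile of a column, store it as a baseline, and flag metrics that later move beyond a tolerance. The `profile` and `detect_drift` functions, the chosen metrics, and the 20% tolerance are assumptions for illustration, not BoltPipeline's actual rules.

```python
from statistics import mean
from typing import Dict, List, Optional


def profile(values: List[Optional[float]]) -> Dict[str, float]:
    """Compute a small profile: row count, null rate, and mean of non-null values."""
    non_null = [v for v in values if v is not None]
    return {
        "row_count": float(len(values)),
        "null_rate": 1 - len(non_null) / len(values) if values else 0.0,
        "mean": mean(non_null) if non_null else 0.0,
    }


def detect_drift(
    baseline: Dict[str, float], current: Dict[str, float], tolerance: float = 0.2
) -> List[str]:
    """List metrics whose relative change from the baseline exceeds the tolerance."""
    drifted: List[str] = []
    for metric, base in baseline.items():
        now = current[metric]
        # Fall back to an absolute comparison when the baseline value is zero.
        denom = abs(base) if base != 0 else 1.0
        if abs(now - base) / denom > tolerance:
            drifted.append(metric)
    return drifted


# Illustrative data only: the second load has new nulls and a shifted mean.
baseline = profile([10.0, 12.0, 11.0, 9.0])
current = profile([10.0, None, 30.0, None])
print(detect_drift(baseline, current))
```

In practice the profile would be computed inside the database with aggregate SQL. Plain Python lists stand in for query results here.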

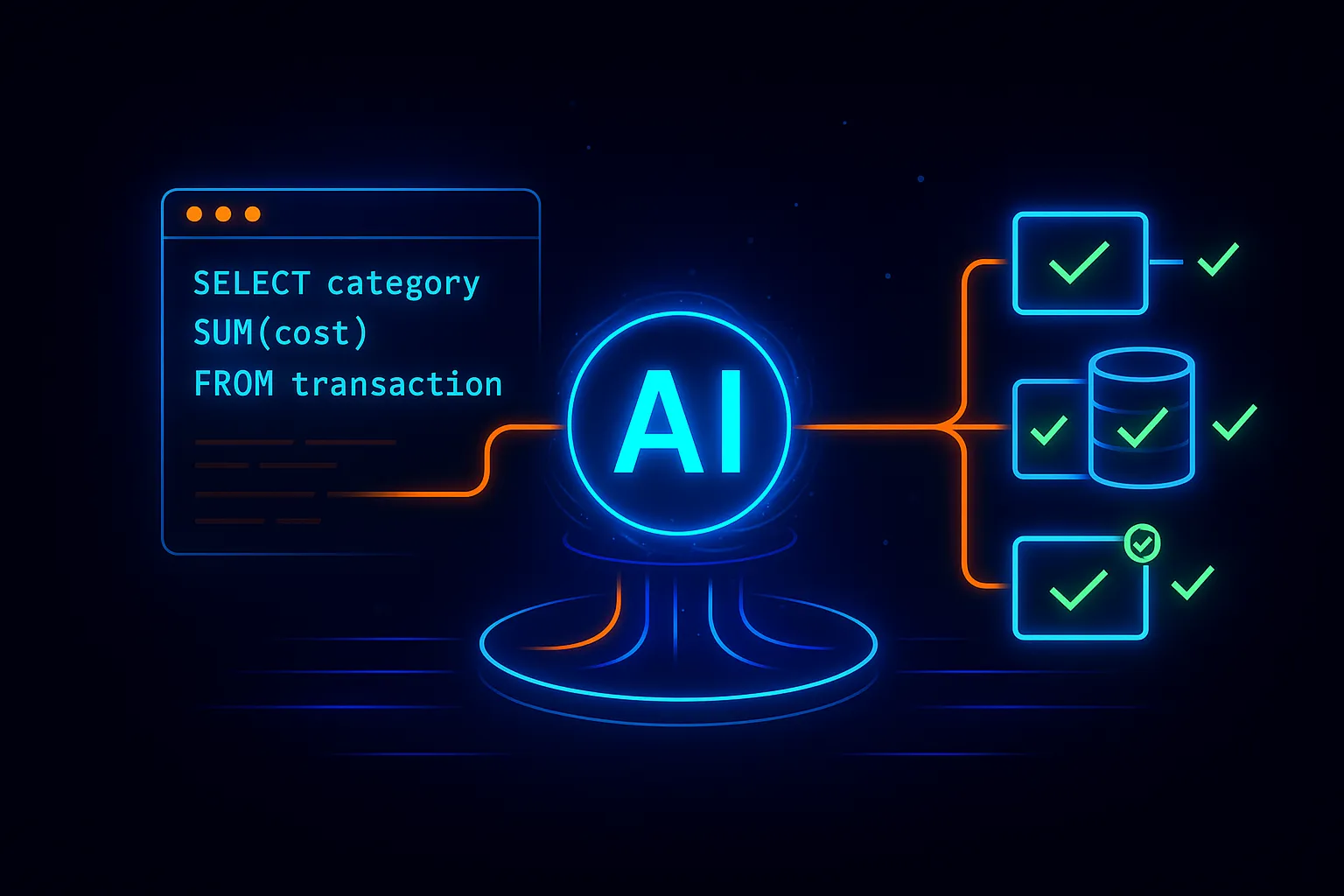

BoltPipeline starts where your team already is: SQL. Engineers and analysts describe what the data should mean, not how to wire pipelines by hand.

This is where BoltPipeline does the heavy lifting. The platform analyzes SQL intent, validates correctness, and surfaces issues before anything ships.

The output is a set of certified artifacts: executable SQL, validation results, lineage, profiles, and audit metadata — portable and customer-owned.

BoltPipeline does not replace your runtime. You deploy and operate pipelines where your data lives.

BoltPipeline provides visibility, safety signals, and governance context — without taking control away from your team.

Every stage produces real artifacts and enforces real gates. Nothing is optional.

- SQL Compilation: Parse, resolve, generate

- SCD Automation: Tag it. We build the MERGE.

- 30+ Rule Validation: Hard gate before production

- Column-Level Lineage: Source to target, every column

- Profiling & Baselines: Know your data before you ship

- Drift & Health Scoring: Continuous after deployment
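To make the SCD Automation idea concrete, here is a minimal sketch of generating a MERGE statement from a table description. It covers only the simplest case, SCD Type 1 (overwrite changed attributes), and everything in it is hypothetical: the `build_scd1_merge` function, its parameters, and the table and column names are not taken from BoltPipeline, and MERGE syntax varies somewhat between database dialects.

```python
from typing import List


def build_scd1_merge(target: str, source: str, keys: List[str], columns: List[str]) -> str:
    """Render a MERGE that overwrites changed attributes (SCD Type 1)."""
    # Match rows on the business key(s).
    on_clause = " AND ".join(f"t.{k} = s.{k}" for k in keys)
    # Overwrite every tracked attribute with the incoming value.
    set_clause = ", ".join(f"{c} = s.{c}" for c in columns)
    all_cols = keys + columns
    insert_cols = ", ".join(all_cols)
    insert_vals = ", ".join(f"s.{c}" for c in all_cols)
    return (
        f"MERGE INTO {target} AS t\n"
        f"USING {source} AS s\n"
        f"ON {on_clause}\n"
        f"WHEN MATCHED THEN UPDATE SET {set_clause}\n"
        f"WHEN NOT MATCHED THEN INSERT ({insert_cols}) VALUES ({insert_vals});"
    )


# Illustrative names only.
sql = build_scd1_merge(
    target="dim_customer",
    source="stg_customer",
    keys=["customer_id"],
    columns=["name", "email"],
)
print(sql)
```

SCD Type 2, which keeps history with effective dates and a current-row flag, needs additional logic to close out old rows and is not shown here.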

BoltPipeline validates SQL pipelines continuously: as they are written, before anything reaches production, and as they run after deployment.

BoltPipeline reduces pipeline failures, review cycles, and operational overhead — while giving leadership confidence that data products are governed, explainable, and safe to scale.

- Speed: Weeks to hours. From SQL to certified, production-ready pipelines

- Trust: Built in. Certification gates, lineage, and explainers at every stage

- Flexibility: No lock-in. SQL-first, portable ANSI artifacts you own

- Cost: In-DB only. No external compute, no data movement, fewer incidents

- Compliance: By design. Data stays in boundary with audit-ready evidence

Walk through a real pipeline using your schemas and business rules: no migration, no lock-in, and no data leaving your database.